Published by Easy Semiconductor Technology (Hong Kong) Limited

In modern industrial environments, control system downtime is one of the most critical risks a chemical plant can face. Even a short interruption in Distributed Control Systems (DCS) or PLC networks can lead to production loss, safety hazards, and significant financial impact. This case study details how a full-scale chemical plant control system failure was diagnosed, contained, and fully restored within 48 hours by the engineering team of Easy Semiconductor Technology (Hong Kong) Limited.

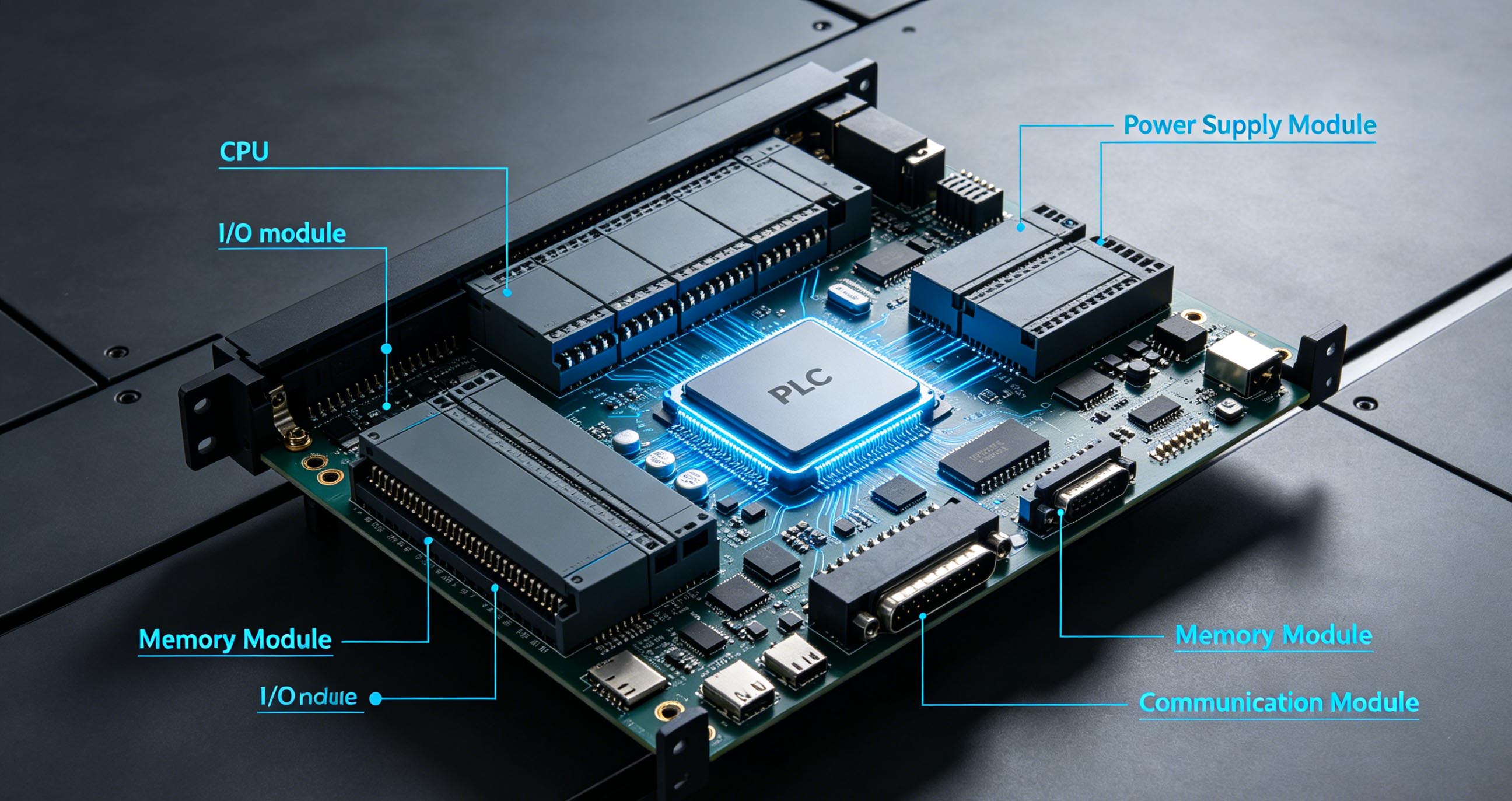

The incident occurred at a mid-sized chemical processing facility operating 24/7 production lines for specialty industrial solvents. The plant relied on a hybrid automation architecture combining PLC-based field control and a centralized DCS for process monitoring, alarm handling, and safety interlocks.

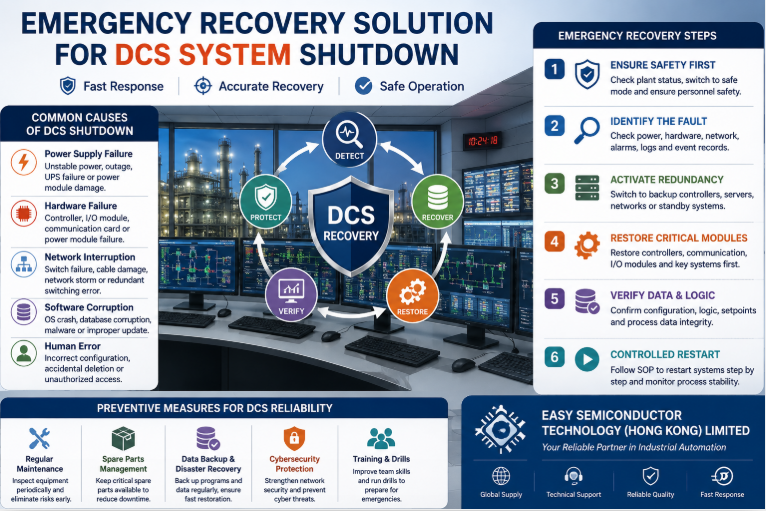

At approximately 02:15 AM, the plant experienced a sudden and widespread control system disruption. Operators reported loss of communication between the DCS master station and multiple remote I/O modules. Within minutes, several process loops entered fail-safe mode, forcing partial shutdown of key production units.

Initial alarms indicated:

- Loss of redundant controller synchronization
- Communication timeout across fieldbus networks
- Abnormal restart loops in multiple PLC stations
- HMI system freezing and data logging failure

The plant’s emergency response team immediately initiated a controlled shutdown of affected units to prevent process instability.

Easy Semiconductor Technology (Hong Kong) Limited was contacted at 03:10 AM under an emergency industrial support contract. A specialized response team was mobilized within 30 minutes, consisting of control system engineers, network diagnostics experts, and process automation specialists.

The first objective was to stabilize the system environment and prevent cascading failures across interconnected subsystems.

Upon arrival at the site, engineers initiated a structured triage process:

- Isolation of affected network segments
- Verification of controller power integrity
- Backup validation of PLC and DCS configuration files
- Assessment of the communication backbone (EtherNet/IP and Modbus TCP layers)

Within the first 12 hours, the engineering team identified the root cause as a multi-layer failure triggered by a corrupted firmware update in a core communication gateway module. The corrupted firmware caused intermittent packet loss, leading to synchronization failure between redundant DCS servers.

Compounding the issue, an automatic failover mechanism repeatedly switched control authority between primary and secondary controllers, creating a loop condition that destabilized the entire supervisory network.
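The oscillation described above is commonly guarded against with a hold-off timer and a switch budget, so that control authority cannot change hands faster than the network can settle. The following is a minimal sketch of that idea, not the plant's actual failover logic; the class name `FailoverGuard` and all thresholds (`min_hold_s`, `max_switches`, `window_s`) are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class FailoverGuard:
    """Limits how often control authority may move between two controllers."""

    min_hold_s: float = 30.0   # hypothetical: minimum time between two switches
    max_switches: int = 3      # hypothetical: switch budget per window
    window_s: float = 600.0    # hypothetical: length of the budget window
    active: str = "primary"
    locked_out: bool = False   # once set, only a manual reset re-enables failover
    _switch_times: list = field(default_factory=list, repr=False)

    def request_switch(self, now: float) -> bool:
        """Return True if the switch was allowed and performed."""
        if self.locked_out:
            return False
        # Forget switches that have aged out of the budget window.
        self._switch_times = [
            t for t in self._switch_times if now - t < self.window_s
        ]
        # Hold-off: refuse to switch again too soon after the last switch.
        if self._switch_times and now - self._switch_times[-1] < self.min_hold_s:
            return False
        # Budget exhausted: treat as flapping and require operator action.
        if len(self._switch_times) >= self.max_switches:
            self.locked_out = True
            return False
        self.active = "secondary" if self.active == "primary" else "primary"
        self._switch_times.append(now)
        return True

    def manual_reset(self) -> None:
        """Operator acknowledgement that re-enables automatic failover."""
        self.locked_out = False
        self._switch_times.clear()
```

In a live system `now` would typically come from a monotonic clock such as `time.monotonic()`. Passing it in explicitly keeps the sketch deterministic and easy to test.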

Secondary contributing factors included:

- Outdated firmware on legacy PLC nodes
- Inconsistent time synchronization across control layers
- Insufficient segmentation between safety and process networks

This combination of issues led to a system-wide communication breakdown rather than a localized fault.
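Of the secondary factors, inconsistent time synchronization is the simplest to check automatically. As an illustrative sketch, assume each node's clock reading (in epoch seconds) has been sampled at the same moment as a trusted reference. The function name, node names, and the 0.5 second tolerance are assumptions for this example, not values from the incident.

```python
def find_clock_skew(reported_times, reference_time, max_skew_s=0.5):
    """Return {node: offset_seconds} for nodes whose clock deviates too far.

    reported_times: mapping of node name to its clock reading (epoch seconds).
    reference_time: reading of the trusted time source at the same moment.
    max_skew_s:     hypothetical tolerance; choose per site requirements.
    """
    return {
        node: round(ts - reference_time, 3)
        for node, ts in reported_times.items()
        if abs(ts - reference_time) > max_skew_s
    }


# Hypothetical readings: one PLC is 2.5 s ahead of the reference.
readings = {"dcs-a": 1000.0, "plc-07": 1002.5, "gateway": 999.9}
skewed = find_clock_skew(readings, reference_time=1000.0)
```

A positive offset means the node's clock runs ahead of the reference, and a negative offset means it lags.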

Once the root cause was confirmed, the recovery process was divided into four parallel workstreams:

1. System Containment

Network engineers isolated the faulty gateway and disabled automatic failover switching. This immediately stabilized the DCS communication layer and prevented further controller oscillation.

2. Data Preservation

All process data logs, historian databases, and configuration files were backed up to secure offline storage. This ensured no loss of production history or recipe data.

3. Firmware Restoration

The corrupted gateway firmware was replaced with a verified stable version. Simultaneously, firmware consistency checks were performed across all PLC devices.
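A firmware consistency check of this kind usually has two parts: confirming that an image file matches a known-good digest before it is loaded, and comparing the version each device reports against an approved baseline. The sketch below illustrates both under stated assumptions. The function names, the inventory tuple layout, and the idea of a per-model approved version are hypothetical, since the source does not describe the tooling that was used.

```python
import hashlib


def sha256_of(path, chunk_size=65536):
    """Compute the SHA-256 digest of a file without loading it all at once."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def image_matches(path, expected_sha256):
    """True if the firmware image at `path` has the expected digest."""
    return sha256_of(path) == expected_sha256.lower()


def firmware_mismatches(inventory, approved):
    """List devices whose reported firmware differs from the approved baseline.

    inventory: iterable of (device, model, version) tuples (hypothetical layout).
    approved:  mapping of model to its approved firmware version.
    """
    issues = []
    for device, model, version in inventory:
        expected = approved.get(model)
        if expected is None:
            issues.append((device, model, version, "no approved baseline"))
        elif version != expected:
            issues.append((device, model, version, f"expected {expected}"))
    return issues
```

For example, calling `firmware_mismatches([("plc-01", "X100", "2.3")], {"X100": "2.4"})` would flag `plc-01` as expecting version 2.4. The device and model names here are invented.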

4. Network Reconfiguration

Engineers restructured the control network topology, introducing stricter segmentation between:

- Safety Instrumented Systems (SIS)
- Process Control Networks (PCN)
- Supervisory Control Networks (SCADA/DCS)

This improved fault isolation and reduced the risk of future cross-system interference.
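One way to sanity-check a segmentation plan like this is to confirm that the zone subnets do not overlap and that traffic between zones is limited to an explicit allow-list. The sketch below uses Python's standard `ipaddress` module. The subnet ranges, zone names, and allowed zone pairs are hypothetical placeholders, not the plant's actual addressing or firewall policy.

```python
import ipaddress

# Hypothetical addressing plan, one subnet per zone.
ZONES = {
    "SIS": "10.10.1.0/24",
    "PCN": "10.10.2.0/24",
    "SUPERVISORY": "10.10.3.0/24",
}

# Hypothetical policy: only supervisory <-> process traffic crosses zones.
ALLOWED_PAIRS = {("SUPERVISORY", "PCN"), ("PCN", "SUPERVISORY")}


def overlapping_zones(zones):
    """Return pairs of zone names whose subnets overlap."""
    nets = {name: ipaddress.ip_network(cidr) for name, cidr in zones.items()}
    names = sorted(nets)
    return [
        (a, b)
        for i, a in enumerate(names)
        for b in names[i + 1:]
        if nets[a].overlaps(nets[b])
    ]


def zone_of(address, zones):
    """Return the zone containing `address`, or None if it is unzoned."""
    ip = ipaddress.ip_address(address)
    for name, cidr in zones.items():
        if ip in ipaddress.ip_network(cidr):
            return name
    return None


def flow_allowed(src, dst, zones, allowed_pairs):
    """Allow traffic within a zone, or between explicitly allowed zone pairs."""
    src_zone, dst_zone = zone_of(src, zones), zone_of(dst, zones)
    if src_zone is None or dst_zone is None:
        return False
    return src_zone == dst_zone or (src_zone, dst_zone) in allowed_pairs
```

With these placeholder values, a supervisory host can reach a process controller, but nothing outside the SIS subnet can reach a safety system. Actual enforcement would sit in switches and firewalls; a script like this only audits the plan.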

After restoration procedures were completed, a comprehensive validation process was initiated.

Testing phases included:

- Redundant controller failover simulation
- Communication stress testing under peak load
- Sensor-to-actuator response verification
- Alarm and interlock system integrity checks
- Full loop tuning recalibration for critical process units

All systems passed stability benchmarks within defined tolerance thresholds. Engineers also monitored the plant under controlled restart conditions to ensure long-term reliability.
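To illustrate what checking a stress-test run against tolerance thresholds can look like, the sketch below evaluates packet loss and 95th-percentile latency from one run. The source does not state the actual benchmarks, so the function name and both thresholds (`max_loss_pct`, `max_p95_ms`) are assumptions made for this example.

```python
import statistics


def evaluate_stress_run(sent, received, latencies_ms,
                        max_loss_pct=0.1, max_p95_ms=50.0):
    """Summarize one communication stress run against pass/fail thresholds.

    sent, received: packet counts for the run.
    latencies_ms:   round-trip latencies of received packets (at least two).
    Thresholds are hypothetical; real limits depend on the control loops served.
    """
    if sent <= 0:
        raise ValueError("sent must be positive")
    loss_pct = 100.0 * (sent - received) / sent
    # quantiles(n=20) yields 19 cut points; the last is the 95th percentile.
    p95_ms = statistics.quantiles(latencies_ms, n=20)[-1]
    return {
        "loss_pct": loss_pct,
        "p95_ms": p95_ms,
        "passed": loss_pct <= max_loss_pct and p95_ms <= max_p95_ms,
    }
```

Using a high percentile rather than the mean keeps occasional long delays visible. Those delays matter for redundant controller synchronization.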

By the end of the 48-hour emergency window, the plant successfully resumed full production operations. All process units were brought back online in a staged sequence to avoid hydraulic and thermal stress.

Key achievements included:

- 100% restoration of DCS and PLC communication
- Zero data loss across historian systems
- Full recovery of safety interlock functionality
- Stable redundant controller synchronization
- Normalization of production throughput

Plant operators confirmed stable performance within the first production cycle following restart.

This incident highlighted several important lessons for modern industrial automation environments:

1. Firmware Management is Critical

Even minor inconsistencies in firmware versions across devices can lead to system-wide instability in tightly integrated architectures.

2. Network Segmentation Improves Resilience

Separating safety, control, and monitoring networks significantly reduces the risk of cascading failures.

3. Redundancy Requires Proper Configuration

Redundant systems must be carefully tuned to avoid conflict loops during failover events.

4. Emergency Preparedness Reduces Downtime

Having pre-defined recovery procedures and access to expert response teams can dramatically reduce recovery time.

The successful restoration of the chemical plant control system within 48 hours demonstrates the importance of rapid response capability, structured diagnostics, and robust automation design. Through coordinated engineering efforts and systematic troubleshooting, Easy Semiconductor Technology (Hong Kong) Limited ensured that production was safely restored with improved system resilience.

This case reinforces a key principle of modern industrial automation: downtime is inevitable, but recovery speed and system design determine the real cost of failure.